
Audio engineers and producers strive for clarity and fidelity in their recordings and mixes. However, a phenomenon called comb filtering can negatively affect the quality of audio signals, making recordings sound muddled or distorted.

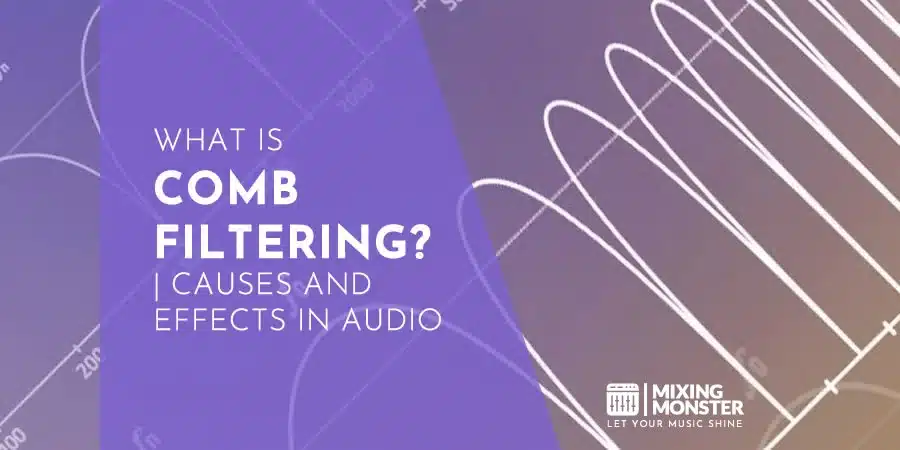

Comb filtering is an audio processing phenomenon that occurs when two or more copies of the same sound combine with a small time offset and interfere with each other, resulting in a series of peaks and dips in the frequency response. The dips are caused by phase cancellation and the peaks by reinforcement; together they are known as a comb filter because, on a frequency response graph, they resemble the teeth of a comb.

In this article, we will explore the science behind comb filtering, how it affects audio quality, and techniques for minimizing its impact in audio processing.

Table Of Contents

1. What Is Comb Filtering In Audio Processing?

2. How Comb Filtering Affects Audio Quality

3. Applications Of Comb Filtering In Audio Processing

4. Techniques For Minimizing Comb Filtering In Audio Processing

5. Avoiding Comb Filtering: Best Practices For Microphone Placement

6. Avoiding Comb Filtering: Delay Compensation In Digital Audio Workstations

7. Avoiding Comb Filtering: Correct Equalization Usage

8. Conclusion

1. What Is Comb Filtering In Audio Processing?

Comb filtering is a fascinating audio processing phenomenon. It occurs when two (or more) identical sound waves overlap with a small time offset between them and thus interfere with each other. When these waves combine, they reinforce each other at some frequencies and cancel each other out at others, resulting in a series of peaks and dips in the frequency response of the combined sound wave.

These peaks and dips resemble the teeth of a comb when plotted on a graph, hence the name “comb filtering.”

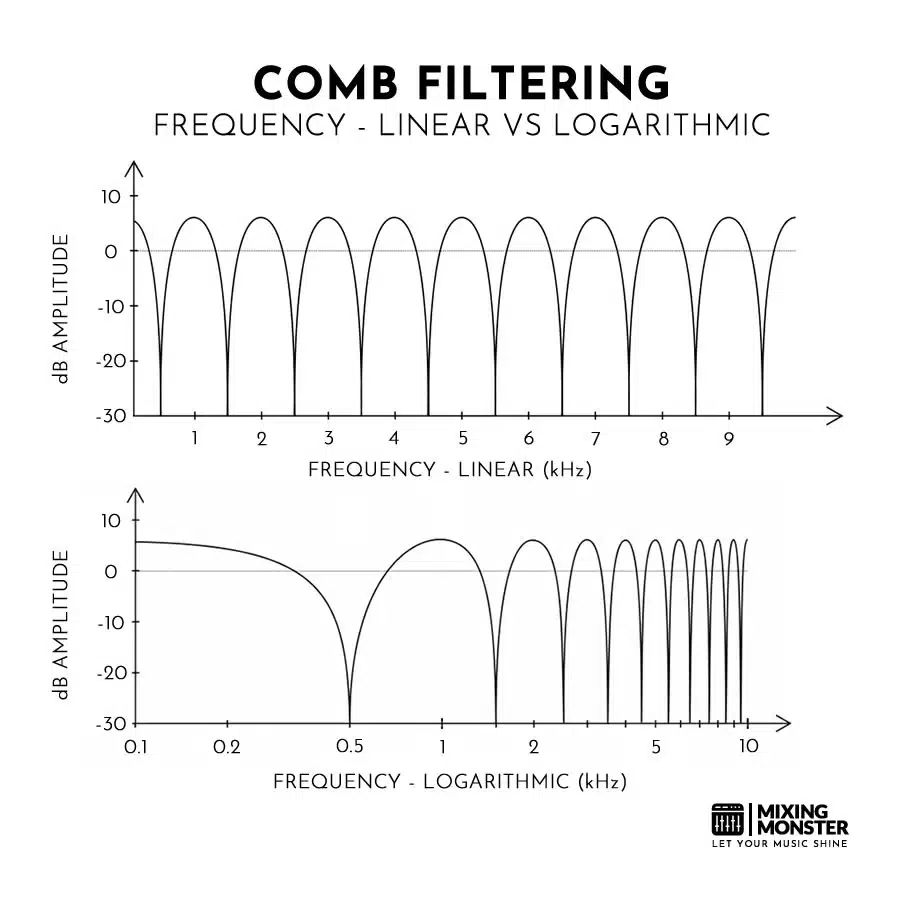

The cause of comb filtering is the phase cancellation that occurs when two identical sound waves are combined with a delay between them. A fixed delay corresponds to a different phase shift at every frequency. At frequencies where the delayed wave ends up 180 degrees out of phase (meaning completely out of phase), the two waves cancel each other out, completely if they are equal in level.

At frequencies where the delay equals a whole number of cycles, the waves line up and reinforce each other. Everything in between is partly amplified or partly attenuated, creating the characteristic peaks and dips of comb filtering. The exact pattern of peaks and dips depends on the length of the delay and the relative level of the two waves.
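To make the pattern concrete, here is a minimal Python sketch. It assumes the simplest case: one signal summed with a single copy of itself at equal level, delayed by a fixed time. Under that assumption the dips fall at odd multiples of 1 / (2 × delay) and the peaks at whole multiples of 1 / delay. The function names are made up for this illustration.

```python
def comb_notches(delay_s, max_freq_hz=20000.0):
    """Dip frequencies (Hz) for a signal summed with an equal-level copy
    delayed by delay_s seconds."""
    if delay_s <= 0:
        raise ValueError("delay_s must be positive")
    notches = []
    k = 0
    # Dips sit at odd multiples of 1 / (2 * delay).
    while (2 * k + 1) / (2.0 * delay_s) <= max_freq_hz:
        notches.append((2 * k + 1) / (2.0 * delay_s))
        k += 1
    return notches


def comb_peaks(delay_s, max_freq_hz=20000.0):
    """Peak frequencies (Hz) for the same setup, starting at 0 Hz."""
    if delay_s <= 0:
        raise ValueError("delay_s must be positive")
    peaks = []
    k = 0
    # Peaks sit at whole multiples of 1 / delay.
    while k / delay_s <= max_freq_hz:
        peaks.append(k / delay_s)
        k += 1
    return peaks


if __name__ == "__main__":
    # A 1 ms delay puts the first dips near 500, 1500 and 2500 Hz.
    print([round(f) for f in comb_notches(0.001)[:3]])
```

Note how a longer delay pushes the first dip lower and packs the teeth closer together, which is why even a fraction of a millisecond can be audible in the midrange.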

Comb filtering can occur in a variety of audio processing situations, including when mixing multiple microphones, using delay effects, or using equalization to boost or cut specific frequencies.

It can be avoided by careful microphone placement, use of delay compensation in digital audio workstations, and avoiding excessive boosting or cutting of specific frequencies in equalization.

2. How Comb Filtering Affects Audio Quality

The effects of comb filtering can be detrimental to the quality and clarity of audio signals. Comb filtering can cause frequency cancellations and distortion, resulting in a hollow or “boxy” sound. The perceived loudness of the audio signal may also be affected, with certain frequencies sounding louder or quieter than others.

How Comb Filtering Can Cause Frequency Cancellation And Distortion:

Comb filtering can cause frequency cancellation and distortion by creating areas of reinforcement and cancellation in the frequency response of the combined sound waves.

When two identical audio waves are perfectly in phase, they combine to create a wave with twice the amplitude of either original wave (an increase of about 6 dB). Conversely, as mentioned before, when two identical waves are perfectly out of phase, they cancel each other out, resulting in silence. These two extremes are the endpoints between which comb filtering occurs.

When two or more waves are slightly out of phase with each other, they combine to create a specific pattern of peaks and dips in the frequency response.

At frequencies where the crests of one wave line up with the crests of the other, the waves reinforce each other, resulting in a boost at those frequencies. However, at frequencies where the crests of one wave line up with the troughs of the other, they cancel each other out, resulting in a dip at those frequencies.

In the case of comb filtering, the peaks and dips occur in a regular pattern (that resembles the teeth of a comb). This pattern can result in areas of frequency cancellation and distortion, where certain frequencies are either greatly reduced or completely eliminated from the audio signal.

The result is a sound that is hollow, thin, or boxy, with a lack of clarity and definition.
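The depth of those peaks and dips can be estimated with a few lines of Python. This is only a sketch under simple assumptions: a single delayed copy, a fixed delay, and a copy level expressed as a linear gain (1.0 means equal level). The function name `comb_gain_db` is hypothetical.

```python
import math


def comb_gain_db(freq_hz, delay_s, copy_gain=1.0):
    """Level change in dB at freq_hz when a copy of the signal, scaled by
    copy_gain and delayed by delay_s seconds, is added to the original."""
    phase = 2.0 * math.pi * freq_hz * delay_s
    # Squared magnitude of 1 + g * e^(-j * phase).
    power = 1.0 + copy_gain ** 2 + 2.0 * copy_gain * math.cos(phase)
    if power <= 1e-20:
        return float("-inf")  # complete cancellation
    return 10.0 * math.log10(power)


if __name__ == "__main__":
    for f in (250, 500, 1000):
        print(f, "Hz:", round(comb_gain_db(f, 0.001, copy_gain=0.5), 2), "dB")
```

With a 1 ms delay and an equal-level copy, 1000 Hz is boosted by about 6 dB while 500 Hz is cancelled almost entirely. If the copy is 6 dB quieter (`copy_gain=0.5`), the dip at 500 Hz shrinks to roughly 6 dB, which is why reducing the level of the delayed signal makes comb filtering less obvious.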

How Comb Filtering Can Affect The Perception Of Sound Quality:

Comb filtering can have a significant impact on the perception of sound quality, often resulting in a sound that is flat, muddy, or phasey. The regular pattern of peaks and dips in the frequency response can cause certain frequencies to be canceled out or greatly reduced, resulting in a lack of clarity and definition in the audio signal.

This effect can be especially noticeable in recorded or mixed audio, where multiple sound sources are combined. If two microphones sit at different distances from the same source, the sound reaches them at slightly different times, so the two recorded signals are out of phase with each other at certain frequencies. When these signals are combined in the mix, the resulting audio signal may suffer from comb filtering.

The perceived loudness of the audio signal can also be affected by comb filtering, with certain frequencies sounding louder or softer than others. This can make it difficult to achieve a balanced mix, where all instruments and sounds are clearly audible.

In extreme cases, comb filtering can make it difficult to distinguish individual sounds or instruments in a mix, making the overall sound muddy and indistinct. As such, it is important for audio engineers and producers to be aware of the effects of comb filtering and take steps to minimize its impact during recording and mixing.

3. Applications Of Comb Filtering In Audio Processing

Comb filtering can occur in a variety of audio processing scenarios, including recording, mixing, and playback. It is most commonly encountered when multiple microphones are used to capture sound in the same room or space, such as in a live concert or recording session.

For example, if two microphones are used to record a guitar amp, but one microphone is placed closer to the amp than the other, the resulting signals may be slightly out of phase with each other. When these signals are combined in the mix, comb filtering can occur, resulting in a sound that is hollow or boxy.
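As a rough illustration of the guitar amp example, the sketch below converts the difference in microphone distance into a delay and into the frequency of the first dip. It assumes a speed of sound of 343 m/s (dry air at about 20 °C), equal levels, and direct sound only, with no room reflections. The function names and distances are invented for the example.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumption: dry air at roughly 20 degrees C


def arrival_delay_s(near_mic_m, far_mic_m, speed=SPEED_OF_SOUND_M_S):
    """Extra time the sound needs to reach the farther microphone."""
    return abs(far_mic_m - near_mic_m) / speed


def first_notch_hz(near_mic_m, far_mic_m, speed=SPEED_OF_SOUND_M_S):
    """Frequency of the lowest dip when both microphone signals are summed,
    or None if both microphones are at the same distance."""
    delay = arrival_delay_s(near_mic_m, far_mic_m, speed)
    if delay == 0:
        return None
    return 1.0 / (2.0 * delay)


if __name__ == "__main__":
    # One microphone 5 cm from the speaker cone, the other 30 cm away.
    print(round(arrival_delay_s(0.05, 0.30) * 1000, 3), "ms")
    print(round(first_notch_hz(0.05, 0.30)), "Hz")
```

A 25 cm difference works out to roughly 0.73 ms and a first dip around 686 Hz, right in the body of a guitar tone.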

Comb filtering can also occur in playback scenarios, such as when using a stereo or surround sound system. When the same signal comes from two speakers and the listener is closer to one speaker than the other, the two arrivals are offset in time and can comb filter at the listening position.

This effect can be especially noticeable when listening to audio recordings that were mixed in mono, because both speakers then reproduce exactly the same signal and certain frequencies can be canceled out or greatly reduced.

While comb filtering is generally considered a negative effect in audio processing, it can also be used creatively for sound design in certain contexts, for example:

Creative Applications For Comb Filtering In Audio Processing:

- Adding Texture To A Sound:

By applying a comb filter to a sound, the peaks and dips in the frequency response can create a unique texture or character. This effect can be used to add interest or depth to a sound, especially in electronic music or sound design.

- Creating A “Flanging” Effect:

Flanging is a classic audio effect (often heard on guitar) that is created by mixing two identical audio signals together, with one signal delayed slightly in time and the delay slowly varied. The resulting moving comb filter creates a distinctive “swooshing” sound that is often used in psychedelic or experimental music.

- Simulating Resonant Filters:

Resonant filters are used to emphasize or attenuate specific frequencies in a sound. By applying a comb filter to a sound, it is possible to simulate the effect of a resonant filter, with certain frequencies being emphasized or attenuated.

- Generating Metallic Or Percussive Sounds:

The comb filtering effect can be used to generate metallic or percussive sounds by emphasizing the high-frequency harmonics of a sound. This effect is often used in sound design for video games or film, where unique or futuristic sounds are required.
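For readers who want to experiment with these creative uses, here is a minimal Python sketch of a static comb filter and a very basic flanger built on the same idea. It works on plain lists of samples and rounds the delay to whole samples, which is a simplification (real flangers usually interpolate between samples and often add feedback). The function names and default settings are assumptions chosen for illustration.

```python
import math


def comb_filter(samples, delay_samples, gain=1.0):
    """Add a copy of the signal, delayed by delay_samples and scaled by gain."""
    out = []
    for n, dry in enumerate(samples):
        delayed = samples[n - delay_samples] if n >= delay_samples else 0.0
        out.append(dry + gain * delayed)
    return out


def flanger(samples, sample_rate, max_delay_ms=5.0, rate_hz=0.25, mix=0.5):
    """Blend the signal with a copy whose delay is swept by a slow sine LFO."""
    max_delay = max_delay_ms / 1000.0 * sample_rate
    out = []
    for n, dry in enumerate(samples):
        # LFO value between 0 and 1 sweeps the delay between 0 and max_delay.
        lfo = 0.5 * (1.0 + math.sin(2.0 * math.pi * rate_hz * n / sample_rate))
        delay = int(round(lfo * max_delay))
        wet = samples[n - delay] if n >= delay else 0.0
        out.append((1.0 - mix) * dry + mix * wet)
    return out


if __name__ == "__main__":
    # An impulse through the static comb filter shows the original plus its echo.
    print(comb_filter([1.0, 0.0, 0.0, 0.0], delay_samples=2))
```

Because the flanger's delay keeps changing, the teeth of the comb sweep up and down the spectrum, which is what produces the swooshing character.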

4. Techniques For Minimizing Comb Filtering In Audio Processing

To avoid comb filtering in audio processing, it is important to carefully consider the placement and phase relationships of microphones, speakers, and other audio equipment. Techniques such as delay compensation, equalization, and careful microphone placement can help to minimize the effects of comb filtering and create a clear, balanced sound.

These are some of the most effective techniques for minimizing comb filtering:

Techniques For Minimizing Comb Filtering In Audio Processing:

- Use A High-Quality Linear-Phase Equalizer:

Linear-phase equalizers are designed to minimize phase distortion in the audio signal, which can help to minimize comb filtering. These types of equalizers are often used in mastering and post-production to achieve a more transparent sound.

- Avoid Using Multiple Microphones:

When recording audio, using multiple microphones can lead to phase cancellation and comb filtering. Whenever possible, try to use a single microphone to capture the sound source.

- Use An All-Pass Filter:

An all-pass filter is a type of filter that changes the phase of a signal without changing its level, so it can be used to adjust the phase relationship between two audio signals. By carefully adjusting the all-pass filter, it is possible to minimize the effects of comb filtering.

- Adjust The Distance Between The Sound Source And The Microphone:

The distance between the sound source and the microphone can have a significant impact on the phase relationship between the direct sound and the reflected sound waves. By adjusting the distance between the sound source and the microphone, it is possible to minimize comb filtering.

- Adjust The Timing Of The Audio Signal:

In some cases, adjusting the timing of the audio signal can help to minimize comb filtering. This technique is often used in post-production to align multiple tracks of the same source, for example a close microphone and a room microphone, so that their waveforms line up.

Minimizing comb filtering requires careful attention to the phase relationship between audio signals and a good understanding of how sound waves interact with the environment. By using these techniques, it is possible to achieve clear, balanced audio without the negative effects of comb filtering.
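The timing adjustment mentioned in the list above can be sketched in Python. The idea here is to slide one track against the other and keep the offset where the two waveforms match best (a simple cross-correlation). This is an illustrative sketch, not how any particular DAW or alignment plugin works: it only searches for cases where the second track is late, uses whole-sample offsets, and the function names are hypothetical.

```python
def estimate_lag(reference, delayed, max_lag):
    """Return the lag in samples (0..max_lag) at which `delayed` best
    matches `reference`."""
    best_lag = 0
    best_score = float("-inf")
    for lag in range(max_lag + 1):
        # Multiply the reference with the shifted track and sum the result;
        # the sum is largest when the waveforms line up.
        score = sum(r * d for r, d in zip(reference, delayed[lag:]))
        if score > best_score:
            best_lag = lag
            best_score = score
    return best_lag


def align(delayed, lag):
    """Shift the late track earlier by `lag` samples, padding the end."""
    return delayed[lag:] + [0.0] * lag


if __name__ == "__main__":
    close_mic = [0.0, 1.0, 0.5, -0.3, 0.0, 0.0, 0.0, 0.0]
    room_mic = [0.0, 0.0, 0.0, 0.0, 1.0, 0.5, -0.3, 0.0]  # same sound, 3 samples late
    lag = estimate_lag(close_mic, room_mic, max_lag=5)
    print(lag, align(room_mic, lag))
```

In practice the two tracks are never identical, so the result should be treated as a starting point and checked by ear.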

5. Avoiding Comb Filtering: Best Practices For Microphone Placement

Proper microphone placement is critical for avoiding phase cancellation and minimizing comb filtering. These are some best practices for microphone placement:

Avoiding Comb Filtering: Best Practices For Microphone Placement:

- Use The 3:1 Rule:

When using multiple microphones, each on its own sound source, make sure that the distance between the microphones is at least three times the distance between each microphone and its sound source. The time difference is still there, but each microphone picks up the neighboring source at a much lower level, which keeps the peaks and dips shallow and helps to minimize phase cancellation and comb filtering.

- Experiment With Microphone Angles:

Changing the angle of a microphone can have a significant impact on the phase relationship between multiple microphones. Try angling the microphones slightly towards or away from each other to find the best placement.

- Use A Coincident Pair:

A coincident pair is a type of stereo microphone placement where two directional microphones are placed with their capsules as close together as possible and angled apart, commonly at 90 degrees to each other. Because sound reaches both capsules at almost the same time, this technique can help to minimize phase cancellation and comb filtering in stereo recordings.

- Use A Single Microphone:

In some cases, using a single microphone may be the best option for avoiding phase cancellation and comb filtering. This is especially true when recording a single sound source or instrument.

- Use A Low-Cut Filter:

Some microphones have a built-in low-cut filter that can be used to reduce the amount of low-frequency energy that is captured. This can help to minimize phase cancellation and comb filtering in certain recording scenarios.

The key to avoiding phase cancellation and minimizing comb filtering is to be aware of the phase relationship between multiple microphones and experiment with different microphone placements until the best sound is achieved.
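To see why the 3:1 rule from the list above helps, the following Python sketch estimates how much quieter the distant pickup is and how deep the resulting peaks and dips can get. It assumes the inverse-distance law (level falls in proportion to distance, as in a free field with no reflections) and omnidirectional pickup, so real rooms and directional microphones will behave differently. The function names are made up for this example.

```python
import math


def meets_three_to_one(source_to_mic_m, mic_to_mic_m):
    """True if the microphone spacing is at least 3x the source distance."""
    return mic_to_mic_m >= 3.0 * source_to_mic_m


def bleed_gain(near_m, far_m):
    """Linear level of the far pickup relative to the near one,
    assuming level falls off in proportion to distance."""
    return near_m / far_m


def ripple_db(bleed):
    """Largest boost and deepest dip (in dB) when a delayed copy at linear
    level `bleed` is summed with the main signal."""
    peak = 20.0 * math.log10(1.0 + bleed)
    dip = 20.0 * math.log10(1.0 - bleed) if bleed < 1.0 else float("-inf")
    return peak, dip


if __name__ == "__main__":
    g = bleed_gain(0.3, 0.9)  # far pickup is three times as distant
    print(round(20.0 * math.log10(g), 1), "dB bleed")
    print([round(x, 1) for x in ripple_db(g)])
```

At a 3:1 ratio the distant pickup is about 9.5 dB quieter, so the comb filter's teeth are limited to roughly +2.5 dB and -3.5 dB instead of a full cancellation.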

6. Avoiding Comb Filtering: Delay Compensation In Digital Audio Workstations

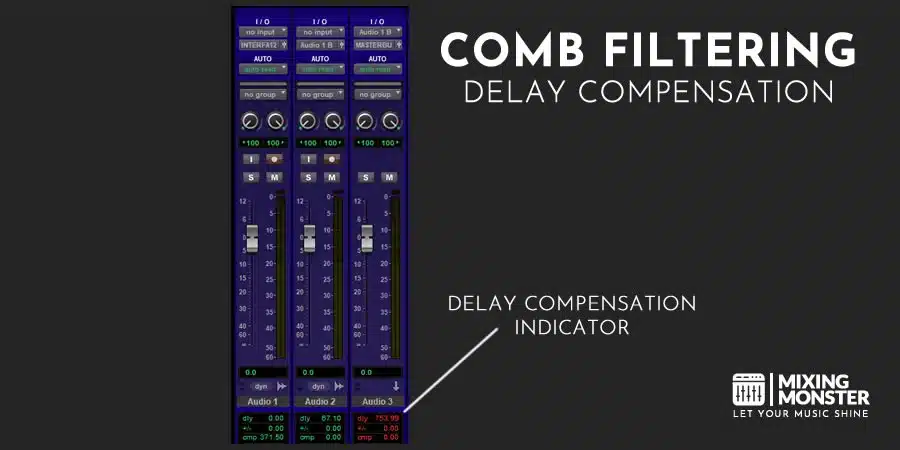

Delay compensation is a technique used in digital audio workstations (DAWs) to minimize comb filtering and phase cancellation.

When multiple audio tracks are recorded or processed through different plugins, there can be variations in the amount of latency or delay introduced by each plugin. These variations can cause the phase relationship between the tracks to shift, resulting in comb filtering and other undesirable effects.

To avoid this, many DAWs include a delay compensation feature that automatically adjusts the timing of each track to ensure that they stay in phase with each other. This is accomplished by reading the amount of latency each plugin reports and then delaying the other tracks so that every track ends up with the same total delay.
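The basic arithmetic behind delay compensation can be sketched in a few lines of Python. This is a simplified illustration, not the implementation of any specific DAW: it assumes each track has one known latency figure in samples, and the track names and numbers are invented.

```python
def compensation_delays(track_latencies):
    """Given {track name: plugin latency in samples}, return the extra
    delay each track needs so that all tracks line up again."""
    if not track_latencies:
        return {}
    # Every track is padded up to the latency of the slowest track.
    longest = max(track_latencies.values())
    return {name: longest - latency for name, latency in track_latencies.items()}


if __name__ == "__main__":
    latencies = {"kick": 0, "snare": 64, "bass": 512}
    print(compensation_delays(latencies))
```

The track with the heaviest plugin chain gets no extra delay, and everything else is held back to match it.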

By using delay compensation, it is possible to minimize comb filtering and phase cancellation without requiring manual adjustments to each track. This can save time and improve the overall sound quality of the recording or mix.

However, it is important to note that delay compensation is not a perfect solution and may not work in all situations.

Some plugins may report their latency incorrectly, or introduce frequency-dependent phase shifts rather than a fixed delay, and these cannot be corrected using standard delay compensation techniques. In these cases, it may be necessary to use other techniques, such as manually adjusting the phase relationship between tracks or using different plugins with lower latency.

7. Avoiding Comb Filtering: Correct Equalization Usage

Equalization is a powerful tool for shaping the tonal balance of audio signals, but it can also introduce comb filtering if not used carefully. Here are some techniques for avoiding excessive boosting or cutting of specific frequencies in equalization and minimizing the risk of comb filtering:

Avoiding Comb Filtering: Correct Equalization Usage:

- Use A Narrow Bandwidth (High Q-Factor):

The Q-factor determines the width of the frequency band affected by the equalizer. A higher Q-factor gives a narrower band, which affects a smaller range of frequencies and reduces the risk of boosting or cutting too much in a specific frequency range.

- Use Gentle Curves:

When making EQ adjustments, use gentle curves instead of sharp changes. This can help to avoid introducing sudden phase shifts that can cause comb filtering.

- Use A Spectrum Analyzer:

A spectrum analyzer can be used to visualize the frequency content of an audio signal and identify areas that need equalization. By using a spectrum analyzer, you can avoid boosting or cutting specific frequencies excessively.

- Use Subtractive EQ:

Subtractive EQ involves cutting frequencies that are causing problems rather than boosting the frequencies that you want to emphasize. This can help to avoid excessive boosting that can cause comb filtering.

- Use A Linear-Phase Equalizer:

Linear-phase equalizers minimize phase distortion in the audio signal, which can help to avoid comb filtering.

8. Conclusion

In conclusion, comb filtering is a phenomenon that can have a significant impact on the perceived sound quality of audio recordings and mixes. It occurs when there is a phase difference between two or more audio signals, resulting in frequency cancellations and distortions.

While comb filtering can be caused by a variety of factors, including microphone placement, it can also be minimized or avoided through careful equalization, delay compensation, and other techniques.

By understanding the causes and effects of comb filtering and implementing best practices to minimize it, audio professionals can ensure that their recordings and mixes sound as clear and natural as possible.