Audio waveforms are the backbone of sound editing and engineering. A waveform is a visual representation of sound that shows how its amplitude changes over time, while frequency shows up in how rapidly those changes repeat. Reading and manipulating waveforms lets audio editors shape sound precisely for specific needs and contexts, which makes them an essential tool for creating high-quality audio content.

The exploration of audio waveforms reveals various possibilities for sound manipulation. As we delve deeper, we uncover the concept and science behind these fascinating sound signatures.

Table Of Contents

1. The Fundamentals Of Audio Waveforms

2. Physics Behind Audio Waveforms

3. Types Of Audio Waveforms

4. Acoustics And Audio Waveforms

5. Waveforms In Analog And Digital Audio

6. Editing Techniques For Audio Waveforms

7. Audio Waveforms In Mixing And Mastering

8. Audio Waveforms: Key Takeaways And Future Directions

9. FAQ

1. The Fundamentals Of Audio Waveforms

Audio waveforms are the visual representation of sound and a fundamental aspect of audio editing and engineering. This section explores the basics of sound waves, their properties, and how they are represented graphically.

Understanding Sound Waves

Sound is a mechanical wave: an oscillation of pressure transmitted through a solid, liquid, or gas. In everyday usage, the term refers to frequencies within the range of hearing, roughly 20 Hz to 20 kHz for humans. Sound travels through air or another medium and is heard when it reaches a person’s or an animal’s ear.

The Role Of Amplitude And Frequency

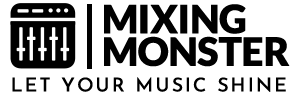

Amplitude and frequency are crucial characteristics of sound waves.

Amplitude is the height of the wave, its displacement from the zero (rest) line, and is perceived mainly as loudness. Frequency, measured in hertz (Hz), is the number of cycles the wave completes per second and determines the pitch of the sound.
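To make the two properties concrete, here is a minimal Python sketch (standard library only) that generates a sine wave with a chosen amplitude and frequency, then reads both values back from the samples. The function names and the 48 kHz sample rate are illustrative assumptions, and counting zero crossings is only a rough frequency estimate that works for simple, clean tones.

```python
import math

def sine_wave(amplitude, frequency_hz, sample_rate=48_000, duration_s=1.0):
    """Samples of amplitude * sin(2*pi*frequency*t) at the given sample rate."""
    n_samples = int(sample_rate * duration_s)
    return [amplitude * math.sin(2 * math.pi * frequency_hz * n / sample_rate)
            for n in range(n_samples)]

def peak_amplitude(samples):
    """Largest distance from the zero line: the wave's amplitude."""
    return max(abs(s) for s in samples)

def estimate_frequency(samples, sample_rate):
    """Rough frequency estimate: upward zero crossings per second."""
    crossings = sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)
    return crossings * sample_rate / len(samples)

tone = sine_wave(amplitude=0.5, frequency_hz=440)
print(round(peak_amplitude(tone), 3))           # about 0.5
print(round(estimate_frequency(tone, 48_000)))  # about 440 (a cycle at the edge can be missed)
```

Doubling `amplitude` doubles the peak but leaves the crossing count unchanged, which mirrors the way loudness and pitch vary independently.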

Visualizing Sound: An Introduction To Waveform Graphics

Waveform graphics visualize precisely these characteristics. A waveform display plots amplitude on the vertical axis against time on the horizontal axis. Frequency is not drawn directly, but it shows up in how tightly the cycles are packed together. Seeing sound this way allows audio engineers to analyze and manipulate it in a more tangible form.
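Because a long recording has far more samples than a screen has pixels, a common approach is to reduce the audio to a minimum and maximum value per pixel column before drawing it. The sketch below shows that idea in Python; `waveform_overview` is a hypothetical helper, and it assumes mono floating-point samples between -1 and 1.

```python
import math

def waveform_overview(samples, width):
    """Reduce samples to `width` (min, max) pairs, one per horizontal pixel column."""
    if width <= 0:
        raise ValueError("width must be positive")
    if not samples:
        return []
    n = len(samples)
    columns = []
    for col in range(width):
        start = col * n // width
        end = max(start + 1, (col + 1) * n // width)  # at least one sample per column
        chunk = samples[start:end]
        columns.append((min(chunk), max(chunk)))
    return columns

# Ten cycles of a full-scale sine drawn 10 pixels wide: each column spans one cycle.
samples = [math.sin(2 * math.pi * n / 100) for n in range(1000)]
for low, high in waveform_overview(samples, 10):
    print(f"{low:+.2f} .. {high:+.2f}")
```

Drawing a vertical line from each column's minimum to its maximum produces the familiar filled waveform shape.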

2. Physics Behind Audio Waveforms

Delving deeper into the science of sound, this section examines the physical principles governing audio waveforms. Understanding these concepts is vital for any audio professional seeking to master the craft of sound editing and engineering.

Sound Wave Propagation

Sound waves propagate through various mediums (like air, water, and solids) as vibrations. These vibrations create longitudinal waves characterized by compressions and rarefactions, allowing the sound to travel over distances.

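The speed of sound depends on the medium: about 343 m/s in air at 20 °C, about 1,480 m/s in water, and several thousand meters per second in solids such as steel. Because a wave’s wavelength equals its speed divided by its frequency, the same frequency has a longer wavelength in water than in air.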
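The wavelength relationship is simple enough to compute directly: wavelength = speed ÷ frequency. The Python sketch below uses approximate speeds (about 343 m/s in air at 20 °C and about 1,480 m/s in water); treat them as rounded, illustrative values rather than precise constants.

```python
# Approximate speeds of sound in m/s (rounded, illustrative values).
SPEED_OF_SOUND_M_S = {"air at 20 C": 343.0, "water": 1480.0}

def wavelength_m(speed_m_s, frequency_hz):
    """Wavelength in meters = speed / frequency."""
    return speed_m_s / frequency_hz

for medium, speed in SPEED_OF_SOUND_M_S.items():
    for f in (100, 1_000, 10_000):
        print(f"{medium}: {f} Hz -> {wavelength_m(speed, f):.3f} m")
```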

The Relationship Between Frequency And Pitch

Frequency, a fundamental aspect of sound waves, is the physical property we perceive as pitch. Higher frequencies are heard as higher pitches and lower frequencies as lower pitches. The relationship follows ratios rather than differences: doubling a frequency raises the pitch by one octave. This relationship is pivotal in music and audio editing, as it determines which note a sound is heard as.
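Because pitch follows frequency ratios, musical notes map to frequencies exponentially. The sketch below assumes twelve-tone equal temperament, the MIDI note-numbering convention (A4 = note 69), and a 440 Hz reference for A4; other tunings would change the numbers, and the function names are hypothetical.

```python
import math

A4_HZ = 440.0   # assumed tuning reference
A4_MIDI = 69    # MIDI note number of A4

def note_to_frequency(midi_note, a4_hz=A4_HZ):
    """Equal temperament: each semitone multiplies frequency by 2 ** (1/12)."""
    return a4_hz * 2 ** ((midi_note - A4_MIDI) / 12)

def frequency_to_note(frequency_hz, a4_hz=A4_HZ):
    """Nearest MIDI note number and the deviation from it in cents."""
    exact = A4_MIDI + 12 * math.log2(frequency_hz / a4_hz)
    nearest = round(exact)
    return nearest, 100 * (exact - nearest)

print(note_to_frequency(69))            # 440.0
print(note_to_frequency(81))            # 880.0 (one octave up)
print(round(note_to_frequency(60), 2))  # 261.63 (middle C)
```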

Harmonics And Overtones Explained

Harmonics and overtones are essential elements in the timbre, or tone color, of a sound. A vibrating source produces a fundamental frequency along with harmonics at integer multiples of it: a 100 Hz fundamental has harmonics at 200 Hz, 300 Hz, 400 Hz, and so on.

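Overtones are the frequencies above the fundamental. They can be harmonic (integer multiples of the fundamental) or inharmonic (not integer multiples, as in bells and many percussion instruments), and they add richness and complexity to the sound, playing a crucial role in distinguishing different sounds and instruments.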
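The harmonic/inharmonic distinction can be checked numerically by comparing each partial with the nearest integer multiple of the fundamental. This Python sketch is illustrative only: `classify_partials` and its 1% tolerance are hypothetical choices, and real instruments often drift slightly from exact multiples.

```python
def classify_partials(fundamental_hz, partials_hz, tolerance=0.01):
    """Label each partial 'harmonic n' if it lies within `tolerance` (as a fraction)
    of the n-th integer multiple of the fundamental, otherwise 'inharmonic'."""
    labels = []
    for f in partials_hz:
        ratio = f / fundamental_hz
        nearest = max(1, round(ratio))
        if abs(ratio - nearest) / nearest <= tolerance:
            labels.append((f, f"harmonic {nearest}"))
        else:
            labels.append((f, "inharmonic"))
    return labels

print(classify_partials(100.0, [200.0, 300.0, 276.0, 400.5]))
```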

3. Types Of Audio Waveforms

An essential aspect of understanding audio waveforms involves recognizing the different types of waveforms and their unique characteristics. This knowledge is particularly crucial in synthesis and sound design, where the choice of waveform significantly influences the texture and quality of the sound produced.

Sine Waveforms

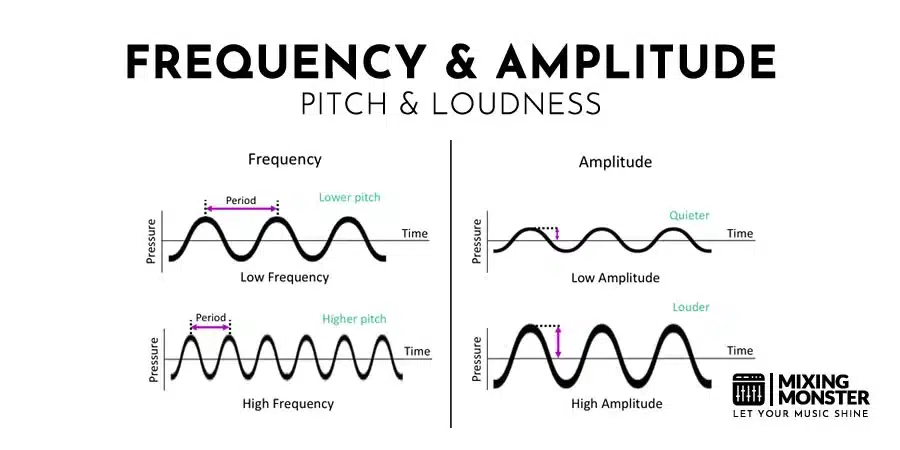

Sine waves are the simplest and most fundamental type of waveform. They represent a single frequency without harmonics, producing a clear tone.

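Because any periodic waveform can be built by adding sine waves of different frequencies and amplitudes (the principle behind Fourier analysis and additive synthesis), sine waves serve as the building blocks for more complex sounds in sound synthesis.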

Square Waveforms

Square waveforms switch abruptly between two fixed levels. This on-off pattern creates a buzzy, hollow tone that contains the fundamental plus only odd-numbered harmonics (the 3rd, 5th, 7th, and so on), which makes it far richer than a sine wave and gives it a sharp, hollow character.

Square waves are commonly used in electronic and chiptune music for their unique timbral qualities.

Triangle Waveforms

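Triangle waveforms strike a balance between sine and square waves. Like square waves, they contain only odd harmonics, but those harmonics fade much faster as they rise, so triangle waves sound softer than square waves while remaining richer in harmonics than sine waves.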

This makes them helpful in creating more mellow yet harmonically interesting sounds in synthesis.

Sawtooth Waveforms

Sawtooth waveforms are known for their bright and edgy sound. They contain every integer harmonic of the fundamental frequency, both odd and even, giving them a full and brassy quality.

Sawtooth waves are widely used in various forms of electronic music, particularly for lead and bass sounds, due to their bold and prominent character.
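The four shapes described above can all be generated from a single repeating phase value that runs from 0 to 1 once per cycle. The sketch below is a naive (non-band-limited) Python oscillator with a hypothetical name. The sharp edges of square and sawtooth waves produce aliasing at higher frequencies, so production synthesizers generally use band-limited techniques instead.

```python
import math

def oscillator(shape, frequency_hz, sample_rate, n_samples):
    """Naive oscillator: compute each sample from a phase in [0, 1)."""
    out = []
    for n in range(n_samples):
        phase = (frequency_hz * n / sample_rate) % 1.0
        if shape == "sine":
            value = math.sin(2 * math.pi * phase)
        elif shape == "square":
            value = 1.0 if phase < 0.5 else -1.0        # on for half a cycle, off for half
        elif shape == "triangle":
            # rises from -1 to 1 over the first half cycle, falls back over the second
            value = 4 * phase - 1 if phase < 0.5 else 3 - 4 * phase
        elif shape == "sawtooth":
            value = 2 * phase - 1                       # ramps up from -1, then jumps back
        else:
            raise ValueError(f"unknown shape: {shape}")
        out.append(value)
    return out

for shape in ("sine", "square", "triangle", "sawtooth"):
    print(shape, [round(v, 2) for v in oscillator(shape, 1, 8, 8)])
```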

Comparison Of Waveform Types

| Waveform Type | Harmonic Content | Sound Quality | Common Uses |
| --- | --- | --- | --- |
| Sine | None (fundamental only) | Pure, clear | Fundamental tones, equipment testing |
| Square | Odd harmonics (amplitude falls as 1/n) | Sharp, hollow | Electronic music, chiptunes |
| Triangle | Odd harmonics (amplitude falls as 1/n²) | Mellow, balanced | Retro and video game music |
| Sawtooth | All harmonics (amplitude falls as 1/n) | Bright, brassy | Lead and bass in electronic music |
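The Harmonic Content column can be written as amplitude recipes taken from the Fourier series of each ideal waveform, and summing sine waves with those amplitudes (additive synthesis) approximates the shape. This is a hedged Python sketch: the helper names are hypothetical, and amplitudes are normalized so the fundamental is 1.

```python
import math

def harmonic_recipe(shape, max_harmonic):
    """(harmonic number, relative amplitude) pairs for an ideal waveform."""
    recipe = []
    for n in range(1, max_harmonic + 1):
        if shape == "sine":
            amp = 1.0 if n == 1 else 0.0
        elif shape == "square":
            amp = 1.0 / n if n % 2 == 1 else 0.0            # odd harmonics, 1/n
        elif shape == "triangle":
            # odd harmonics, 1/n^2, with alternating sign
            amp = (-1) ** ((n - 1) // 2) / n ** 2 if n % 2 == 1 else 0.0
        elif shape == "sawtooth":
            amp = 1.0 / n  # all harmonics, 1/n (all-positive signs give a downward ramp)
        else:
            raise ValueError(f"unknown shape: {shape}")
        recipe.append((n, amp))
    return recipe

def additive(recipe, fundamental_hz, t):
    """Sum of sine partials at time t (seconds)."""
    return sum(amp * math.sin(2 * math.pi * n * fundamental_hz * t) for n, amp in recipe)

# A quarter of the way through a cycle, a square-wave partial sum approaches pi/4
# (the ideal series carries an overall 4/pi factor that the normalization drops).
print(additive(harmonic_recipe("square", 199), 1.0, 0.25), math.pi / 4)
```

Adding more harmonics sharpens the edges of the square and sawtooth approximations, which is another way of seeing why those shapes sound brighter than a triangle.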

Understanding these basic waveform types and their sonic qualities is crucial for anyone engaged in sound design and audio editing. Each waveform brings a unique flavor to the sound palette, allowing various creative possibilities in music production and audio engineering.

4. Acoustics And Audio Waveforms

In this section, we explore the interaction between audio waveforms and their environments, focusing on acoustics, which significantly impact how sound is perceived and manipulated in audio engineering.

Room Acoustics And Waveform Behavior

Room acoustics play a vital role in how sound waves behave. A room’s size, shape, and surface materials determine how much sound is absorbed, reflected, and diffused. A microphone picks up those reflections along with the direct sound, so the room changes the recorded waveform.

Understanding these acoustic properties is essential for optimizing recording and playback environments.

The Impact Of Materials On Sound Waves

Different materials have varying effects on sound waves.

Hard surfaces like concrete and glass reflect sound, creating echoes and reverberations, while softer materials like foam and carpet absorb sound, reducing echoes.

These material characteristics must be considered when setting up a recording studio or optimizing a listening space.
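One classic way to connect materials to reverberation is Sabine’s formula, which estimates RT60 (the time for sound to decay by 60 dB) as 0.161 × volume ÷ total absorption. Total absorption is each surface area multiplied by its absorption coefficient, summed over all surfaces. The Python sketch below uses a hypothetical room and illustrative coefficients, not measured data, and Sabine’s estimate is known to become less accurate in very absorbent rooms.

```python
def sabine_rt60(volume_m3, surfaces):
    """Sabine estimate: RT60 = 0.161 * V / A, where A = sum(area * absorption coefficient)."""
    absorption = sum(area_m2 * alpha for area_m2, alpha in surfaces)
    return 0.161 * volume_m3 / absorption

# Hypothetical 5 m x 4 m x 3 m room: V = 60 m^3; floor 20 m^2, ceiling 20 m^2, walls 54 m^2.
room_volume = 5 * 4 * 3
bare = [(20, 0.02), (20, 0.02), (54, 0.03)]       # illustrative hard-surface coefficients
treated = [(20, 0.30), (20, 0.60), (54, 0.40)]    # illustrative carpet, ceiling tiles, panels
print(f"bare room:    {sabine_rt60(room_volume, bare):.2f} s")
print(f"treated room: {sabine_rt60(room_volume, treated):.2f} s")
```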

Understanding Echoes And Reverberations

Echoes and reverberations result from the interaction of sound waves with their environment.

Echoes are reflections of sound arriving at the listener’s ear after a delay, while reverberations are the persistence of sound in a space after the original sound has stopped.
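Both ideas can be sketched numerically: a reflection’s delay is its extra path length divided by the speed of sound, and a single echo can be simulated by adding a delayed, quieter copy of a signal to itself. The Python below is a minimal illustration; the helper names, the 343 m/s speed, and the single-reflection model are assumptions, whereas real reverberation involves many overlapping reflections.

```python
SPEED_OF_SOUND_AIR_M_S = 343.0  # approximate, air at 20 C

def reflection_delay_s(distance_to_wall_m, speed_m_s=SPEED_OF_SOUND_AIR_M_S):
    """Delay for a source and listener at the same spot: sound travels to the wall and back."""
    return 2 * distance_to_wall_m / speed_m_s

def add_echo(samples, sample_rate, delay_s, gain):
    """y[n] = x[n] + gain * x[n - D], with D = delay in samples."""
    d = int(round(delay_s * sample_rate))
    out = list(samples) + [0.0] * d  # extra room for the echo tail
    for n, x in enumerate(samples):
        out[n + d] += gain * x
    return out

delay = reflection_delay_s(17.15)   # a wall about 17 m away
print(f"{delay * 1000:.1f} ms")
print(add_echo([1.0, 0.0, 0.0, 0.0], sample_rate=1, delay_s=2.0, gain=0.5))
```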

Both can be creatively used or managed in audio editing and room acoustics design.

5. Waveforms In Analog And Digital Audio

This section contrasts the characteristics of analog and digital audio waveforms, explaining how each impacts the recording and editing process. It also delves into the technical aspects of digital audio, including sampling rate, bit depth, and the role of Digital Audio Workstations (DAWs).

Analog Vs. Digital Waveforms

Analog audio waveforms are continuous: a voltage on a cable or a magnetic pattern on tape varies smoothly in step with the original sound wave. In contrast, digital audio waveforms are discrete representations that sample the sound wave at regular intervals and store each measurement as a number.

This fundamental difference affects audio recordings’ quality, editing, and storage.

Sampling Rate And Bit Depth Explained

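The sampling rate, measured in hertz (Hz), is the number of samples taken per second in a digital recording. The highest frequency it can capture is half the sampling rate (the Nyquist frequency), so a 44.1 kHz recording covers frequencies up to 22.05 kHz. Bit depth is the number of bits used to store each sample, which sets the resolution of each measurement and therefore the dynamic range.

Higher sampling rates extend the range of frequencies that can be captured, and greater bit depths lower the noise floor, but both require more storage space.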
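The arithmetic behind these terms is straightforward. This Python sketch computes the Nyquist frequency, the commonly quoted theoretical dynamic range of about 6.02 dB per bit plus 1.76 dB (for a full-scale sine wave and ideal quantization), the integer code a sample is rounded to, and the uncompressed storage size. The helper names are hypothetical.

```python
def nyquist_hz(sample_rate_hz):
    """Highest frequency a given sampling rate can represent."""
    return sample_rate_hz / 2

def dynamic_range_db(bit_depth):
    """Theoretical SNR of a full-scale sine with ideal quantization."""
    return 6.02 * bit_depth + 1.76

def quantize(sample, bit_depth):
    """Round a float sample (nominally -1..1) to the nearest signed integer code, clamped."""
    max_code = 2 ** (bit_depth - 1) - 1
    return max(-max_code - 1, min(max_code, round(sample * max_code)))

def pcm_bytes(sample_rate_hz, bit_depth, channels, seconds):
    """Size of uncompressed PCM audio in bytes."""
    return sample_rate_hz * (bit_depth // 8) * channels * seconds

print(nyquist_hz(44_100))                  # 22050.0
print(round(dynamic_range_db(16), 2))      # 98.08
print(pcm_bytes(44_100, 16, 2, 60))        # 10584000 bytes for one minute of CD-quality stereo
```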

Transients In Audio Waveforms Explained

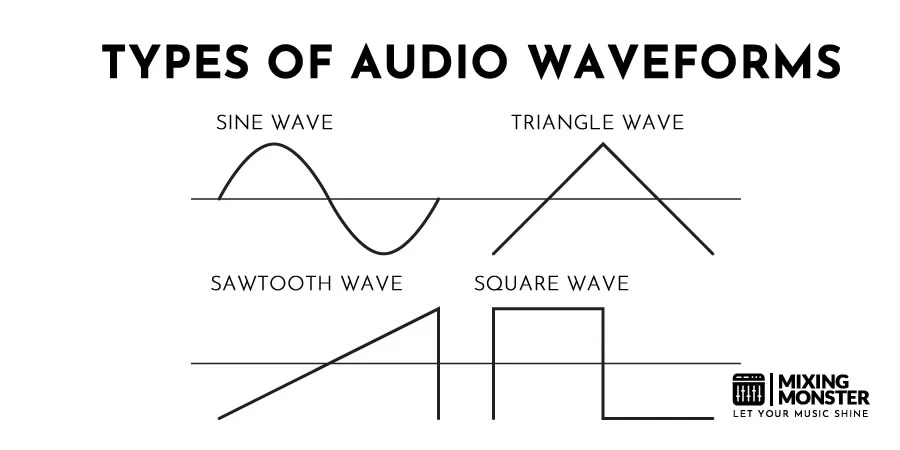

Transients are short, high-energy bursts that occur at the very start of a sound, like the strike of a drum or the pluck of a guitar string.

In digital audio, capturing these transients accurately is crucial for a realistic sound representation.
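One simple way to locate transients is to split the audio into short frames and flag frames whose energy jumps sharply compared with the previous one. The Python sketch below is a crude illustration under that assumption; the frame size, jump ratio, and `detect_transients` name are hypothetical, and dedicated onset detectors often use more robust measures such as spectral flux.

```python
def detect_transients(samples, sample_rate, frame_size=512, ratio=4.0, floor=1e-6):
    """Return start times (s) of frames whose mean energy exceeds `ratio`
    times the previous frame's energy (and an absolute floor)."""
    onsets = []
    prev_energy = floor
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        energy = sum(x * x for x in frame) / frame_size
        if energy > ratio * prev_energy and energy > floor:
            onsets.append(start / sample_rate)
        prev_energy = max(energy, floor)
    return onsets

# Silence, a short burst, then silence again.
audio = [0.0] * 2048 + [0.8] * 512 + [0.0] * 1024
print(detect_transients(audio, sample_rate=1000))
```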

The Role Of Digital Audio Workstations (DAWs)

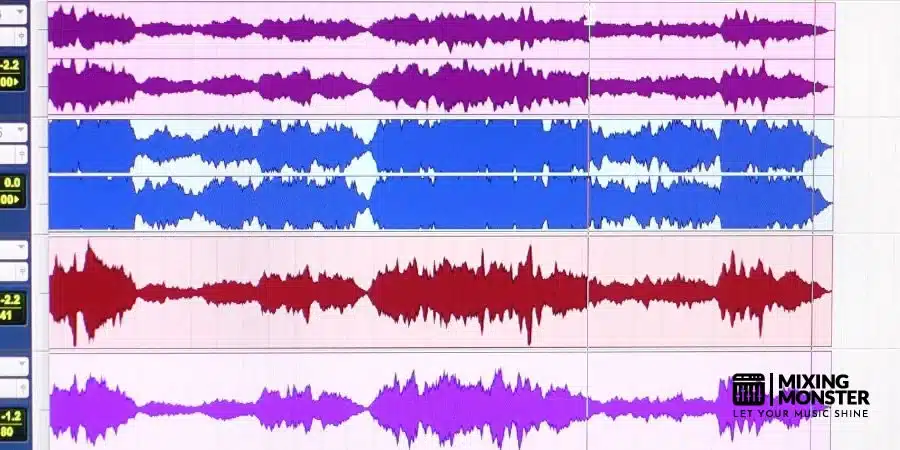

DAWs are integral to modern audio editing, providing tools to manipulate digital waveforms.

They allow for precise editing, effects application, and mixing, making them indispensable in audio production.

6. Editing Techniques For Audio Waveforms

This section is dedicated to the practical aspects of manipulating audio waveforms in editing. It covers a range of basic to advanced techniques suitable for novice and experienced audio editors.

Basic Audio Waveform Manipulation Techniques

Fundamental techniques include trimming, fading, and adjusting gain. Trimming removes unwanted material from the start or end of a clip, fading ramps the level up or down gradually so sound enters or leaves smoothly, and gain adjustment scales the waveform’s amplitude. These are the starting points for any audio editing project.
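These three operations come down to simple sample arithmetic. The Python sketch below assumes a mono list of float samples; the helper names are hypothetical, the fades are linear (editors also offer curved fades), and gain uses the standard amplitude conversion of 10^(dB/20).

```python
def db_to_gain(db):
    """Convert a gain in decibels to a linear amplitude factor."""
    return 10 ** (db / 20)

def apply_gain(samples, db):
    g = db_to_gain(db)
    return [x * g for x in samples]

def trim(samples, sample_rate, start_s, end_s):
    """Keep only the audio between start_s and end_s (seconds)."""
    return samples[int(start_s * sample_rate):int(end_s * sample_rate)]

def fade(samples, fade_in_len, fade_out_len):
    """Linear fade-in over the first fade_in_len samples and fade-out over the last fade_out_len."""
    out = list(samples)
    n = len(out)
    for i in range(min(fade_in_len, n)):
        out[i] *= i / fade_in_len
    for i in range(min(fade_out_len, n)):
        out[n - 1 - i] *= i / fade_out_len
    return out

print(fade([1.0] * 10, 4, 4))
print(round(db_to_gain(-6), 3))  # about half the amplitude
```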

Advanced Editing: Compression And Equalization

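Compression reduces the dynamic range of an audio signal by turning down the parts that rise above a set threshold, so loud sounds become quieter relative to quiet ones. When make-up gain is applied afterward, quiet passages end up louder than before, which can help achieve a balanced mix.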
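The core of a compressor is its static gain curve: below the threshold the level is unchanged, and above it the output rises only 1 dB for every `ratio` dB of input. The Python sketch below applies that curve sample by sample, which is a deliberate simplification: real compressors measure level with attack and release smoothing, and per-sample gain like this would distort real material. Names and default values are hypothetical.

```python
import math

def compress_db(level_db, threshold_db=-20.0, ratio=4.0):
    """Hard-knee static curve: above threshold, output rises 1 dB per `ratio` dB of input."""
    if level_db <= threshold_db:
        return level_db
    return threshold_db + (level_db - threshold_db) / ratio

def compress(samples, threshold_db=-20.0, ratio=4.0, makeup_db=0.0):
    """Per-sample compression without attack/release smoothing (illustration only)."""
    makeup = 10 ** (makeup_db / 20)
    out = []
    for x in samples:
        if x == 0:
            out.append(0.0)  # silence stays silent
            continue
        level_db = 20 * math.log10(abs(x))
        gain_db = compress_db(level_db, threshold_db, ratio) - level_db
        out.append(x * 10 ** (gain_db / 20) * makeup)
    return out

print(compress_db(-10.0))  # -17.5: 10 dB over the threshold becomes 2.5 dB over
print(compress_db(-30.0))  # -30.0: below the threshold, unchanged
```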

Equalization (EQ) allows the editor to boost or cut specific frequencies, shaping the tonal balance of the audio.
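A common building block for EQ bands is the biquad filter. The sketch below uses the peaking-EQ formulas from Robert Bristow-Johnson’s Audio EQ Cookbook to boost or cut around a center frequency, then checks the resulting response. It is a minimal Python illustration with hypothetical helper names; a real equalizer chains several such bands and updates coefficients smoothly.

```python
import cmath
import math

def peaking_eq_coeffs(sample_rate, f0, gain_db, q):
    """Peaking-EQ biquad coefficients (Audio EQ Cookbook), normalized so a0 == 1."""
    amp = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / sample_rate
    alpha = math.sin(w0) / (2 * q)
    b = [1 + alpha * amp, -2 * math.cos(w0), 1 - alpha * amp]
    a = [1 + alpha / amp, -2 * math.cos(w0), 1 - alpha / amp]
    a0 = a[0]
    return [x / a0 for x in b], [x / a0 for x in a]

def biquad(samples, b, a):
    """Direct Form I: y[n] = b0 x[n] + b1 x[n-1] + b2 x[n-2] - a1 y[n-1] - a2 y[n-2]."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out

def magnitude_db(b, a, sample_rate, f):
    """Filter gain in dB at frequency f."""
    z_inv = cmath.exp(-2j * math.pi * f / sample_rate)
    h = (b[0] + b[1] * z_inv + b[2] * z_inv ** 2) / (a[0] + a[1] * z_inv + a[2] * z_inv ** 2)
    return 20 * math.log10(abs(h))

b, a = peaking_eq_coeffs(48_000, 1_000, gain_db=6.0, q=1.0)
print(round(magnitude_db(b, a, 48_000, 1_000), 2))   # boost at the center frequency
print(round(magnitude_db(b, a, 48_000, 10_000), 2))  # much smaller effect far away
```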

Restoring And Enhancing Audio Quality

Audio restoration involves removing unwanted noises like hisses, clicks, or hums and can be particularly challenging.

Enhancing audio quality might involve:

- Stereo widening.

- Adding reverb.

- Using harmonic exciters to add richness and depth to the sound.
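Clicks are among the easier defects to illustrate. The Python sketch below assumes a click is a single sample that jumps far away from both neighbors and replaces it with their average. The threshold and `remove_clicks` name are hypothetical, and real restoration tools handle multi-sample clicks with more careful interpolation; hiss and hum call for different techniques, such as spectral noise reduction and notch filters.

```python
def remove_clicks(samples, threshold=0.5):
    """Replace isolated samples that jump more than `threshold` away from both
    neighbors with the average of those neighbors (a crude declicker)."""
    out = list(samples)
    for n in range(1, len(samples) - 1):
        prev, cur, nxt = samples[n - 1], samples[n], samples[n + 1]
        if abs(cur - prev) > threshold and abs(cur - nxt) > threshold:
            out[n] = (prev + nxt) / 2
    return out

print(remove_clicks([0.0, 0.1, 0.9, 0.1, 0.0]))
```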

Common Mistakes In Audio Waveform Editing

It’s essential to be aware of common pitfalls in audio editing, such as over-compression, excessive EQ, or ignoring phase issues. Understanding these mistakes helps in avoiding them and achieving a more professional result.
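Phase problems are easy to demonstrate: summing a tone with a copy of itself delayed by half a cycle cancels it. The Python sketch below measures the peak of such a mix; the function name and parameters are hypothetical. With real material, which contains many frequencies, a time offset cancels some frequencies and reinforces others (comb filtering) rather than silencing everything.

```python
import math

def mix_with_delayed_copy(frequency_hz, delay_s, sample_rate=48_000, n_samples=4800):
    """Peak level when a sine is summed with a copy of itself delayed by delay_s."""
    peak = 0.0
    for n in range(n_samples):
        t = n / sample_rate
        direct = math.sin(2 * math.pi * frequency_hz * t)
        delayed = math.sin(2 * math.pi * frequency_hz * (t - delay_s))
        peak = max(peak, abs(direct + delayed))
    return peak

print(round(mix_with_delayed_copy(1000, 0.0), 3))     # in phase: the level doubles
print(round(mix_with_delayed_copy(1000, 0.0005), 3))  # half a cycle late: near silence
```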

Quick Tips For Effective Audio Waveform Editing

- Start With Clean Audio: Ensure your recordings are as clean as possible before editing.

- Monitor Levels Carefully: Keep an eye on your levels to avoid clipping.

- Use EQ Sparingly: Equalize gently; avoid overdoing it.

- Compress With Caution: Use compression to balance levels, but don’t overcompress.

- Listen On Different Systems: Check your edits on various audio systems for consistency.

- Save Originals: Always keep a copy of the original files before making edits.

- Take Breaks: Regular breaks help maintain a fresh perspective. Do it!

- Trust Your Ears: Your ears are your best tool; if it sounds right, it usually is.
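Monitoring levels comes down to measuring peaks relative to digital full scale (dBFS). The Python sketch below reports the peak level and counts samples at or near full scale, since runs of such samples often indicate clipping. It assumes float samples where 1.0 is full scale; the helper names and the 0.999 ceiling are hypothetical.

```python
import math

def peak_dbfs(samples):
    """Peak level in dB relative to full scale (1.0)."""
    peak = max((abs(x) for x in samples), default=0.0)
    return 20 * math.log10(peak) if peak > 0 else -math.inf

def count_clipped(samples, ceiling=0.999):
    """Number of samples at or beyond the ceiling."""
    return sum(1 for x in samples if abs(x) >= ceiling)

mix = [0.2, 0.7, 1.0, 1.0, 1.0, -0.4]
print(f"peak {peak_dbfs(mix):.2f} dBFS, {count_clipped(mix)} samples at full scale")
```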

7. Audio Waveforms In Mixing And Mastering

This section focuses on managing audio waveforms in the critical mixing and mastering stages, emphasizing how waveform analysis can enhance these processes.

Analyzing Tracks Using Waveforms

In mixing, visual analysis of waveforms is a valuable complement to listening. It helps identify issues like clipping, phase problems, and timing discrepancies, and it makes it easier to confirm that tracks are well aligned and dynamically balanced.
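Timing discrepancies between two recordings of the same source can be estimated with cross-correlation: slide one waveform against the other and keep the offset where they match best. This brute-force Python sketch is for illustration only; `best_lag` is a hypothetical name, and practical tools typically use FFT-based correlation for speed.

```python
def best_lag(reference, other, max_lag):
    """Lag in samples (-max_lag..max_lag) that best aligns `other` with `reference`.
    A positive result means `other` arrives later than `reference`."""
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for n, x in enumerate(reference):
            m = n + lag
            if 0 <= m < len(other):
                score += x * other[m]
        if score > best_score:
            best, best_score = lag, score
    return best

close_mic = [0.0, 0.0, 1.0, 0.5, 0.0, 0.0, 0.0, 0.0]
room_mic = [0.0, 0.0, 0.0, 0.0, 0.0, 1.0, 0.5, 0.0]  # same hit, 3 samples later
print(best_lag(close_mic, room_mic, max_lag=4))
```

Shifting the later track earlier by the reported number of samples lines the two waveforms up.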

Balancing Tracks Using Waveforms

Balancing tracks involves adjusting levels, panning, and dynamics to create a harmonious mix. Waveforms provide a visual guide to these elements, helping to achieve a balanced and cohesive sound.

For example, waveforms can show whether the parts played by different instruments complement each other rather than compete.
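A simple numerical aid when balancing tracks is the RMS level, which reflects average energy better than the peak level does. The Python sketch below reports RMS in dBFS; the helper name is hypothetical, and because RMS ignores how the ear weights different frequencies, loudness measures such as LUFS (defined in ITU-R BS.1770) are often preferred for level matching.

```python
import math

def rms_dbfs(samples):
    """Root-mean-square level in dB relative to full scale (1.0)."""
    if not samples:
        return -math.inf
    mean_square = sum(x * x for x in samples) / len(samples)
    return 10 * math.log10(mean_square) if mean_square > 0 else -math.inf

vocal = [0.5, -0.5] * 100
guitar = [0.25, -0.25] * 100
print(f"vocal is {rms_dbfs(vocal) - rms_dbfs(guitar):.1f} dB louder than guitar")
```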

Utilizing Waveforms For Stereo Imaging

Stereo imaging involves placing sounds within the stereo field to create a sense of width and depth. Comparing the left and right channel waveforms, or viewing them on a correlation meter or goniometer, indicates how wide the stereo spread is and helps you make informed decisions about panning and stereo enhancement techniques.
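Two common stereo measurements can be sketched in a few lines: a correlation value (close to +1 for near-mono material, around 0 for very wide or unrelated channels, negative when the channels are out of phase) and a mid/side split. This Python version is a minimal illustration with hypothetical helper names; correlation meters in DAWs compute similar values over short, continuously updated windows.

```python
import math

def stereo_correlation(left, right):
    """Normalized zero-lag correlation between the channels, from -1 to +1."""
    num = sum(l * r for l, r in zip(left, right))
    den = math.sqrt(sum(l * l for l in left) * sum(r * r for r in right))
    return num / den if den else 0.0

def mid_side(left, right):
    """Mid = what the channels share; side = how they differ."""
    mid = [(l + r) / 2 for l, r in zip(left, right)]
    side = [(l - r) / 2 for l, r in zip(left, right)]
    return mid, side

left = [1.0, 0.5, -0.3]
print(round(stereo_correlation(left, left), 3))                # identical channels
print(round(stereo_correlation(left, [-x for x in left]), 3))  # polarity-inverted channel
```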

8. Audio Waveforms: Key Takeaways And Future Directions

As we conclude our deep dive into audio waveforms, it’s clear that these fundamental sound elements are more than just technicalities—they are the art and science of audio engineering personified.

The Essence Of Audio Waveforms

Reflecting on our journey through the intricacies of audio waveforms, we recognize their pivotal role in shaping the sounds that define our experiences.

From the pure tones of sine waves to the harmonically rich sawtooth, understanding waveforms is akin to understanding the language of sound itself.

Innovations On The Horizon

The field of audio engineering is continually evolving, with new technologies and methodologies emerging regularly.

Innovations like AI-assisted editing, advanced digital synthesis, and immersive audio formats are changing how we interact with waveforms and promise even more precision and creative freedom.

A Parting Note: The Art Of Listening

Above all, the most crucial skill in our toolkit remains our ability to listen—not just to the sound itself, but to the story it tells through its waveform.

As we ride the ever-changing audio wave, remember that at the heart of every great sound is an even greater listener.

9. FAQ

- What Is The Most Important Aspect Of A Waveform For Audio Editing?

The most critical aspect of a waveform for audio editing is its amplitude, which indicates the loudness of the sound. Understanding amplitude is vital to managing levels, avoiding clipping, and achieving a balanced mix.

- How Do Different Genres Of Music Affect Waveform Editing?

Different music genres have unique waveform characteristics. For instance, electronic music might display more consistent waveforms due to synthesized sounds, whereas acoustic genres show more dynamic variations. Editors must adapt their techniques to these genre-specific waveforms for optimal results.

- What Are Common Mistakes Made By Beginners In Waveform Editing?

Common mistakes include over-compression (leading to loss of dynamics), excessive equalization (altering the natural tone excessively), and ignoring phase issues (resulting in sound cancellations). Beginners should focus on subtle enhancements and preserve the natural characteristics of the sound.

- Can Waveform Editing Significantly Improve Sound Quality?

Yes, skilled waveform editing can drastically improve sound quality. It involves balancing levels, removing noise, enhancing clarity, and correcting timing issues, all contributing to a more polished and professional audio output.

- What Are Amplitude And Frequency In Audio Waveforms?

Amplitude in audio waveforms represents the strength or loudness of the sound signal. Higher amplitude means louder sound. Frequency refers to the number of times the waveform repeats per second, determining the pitch of the sound. Higher frequency results in a higher pitch.